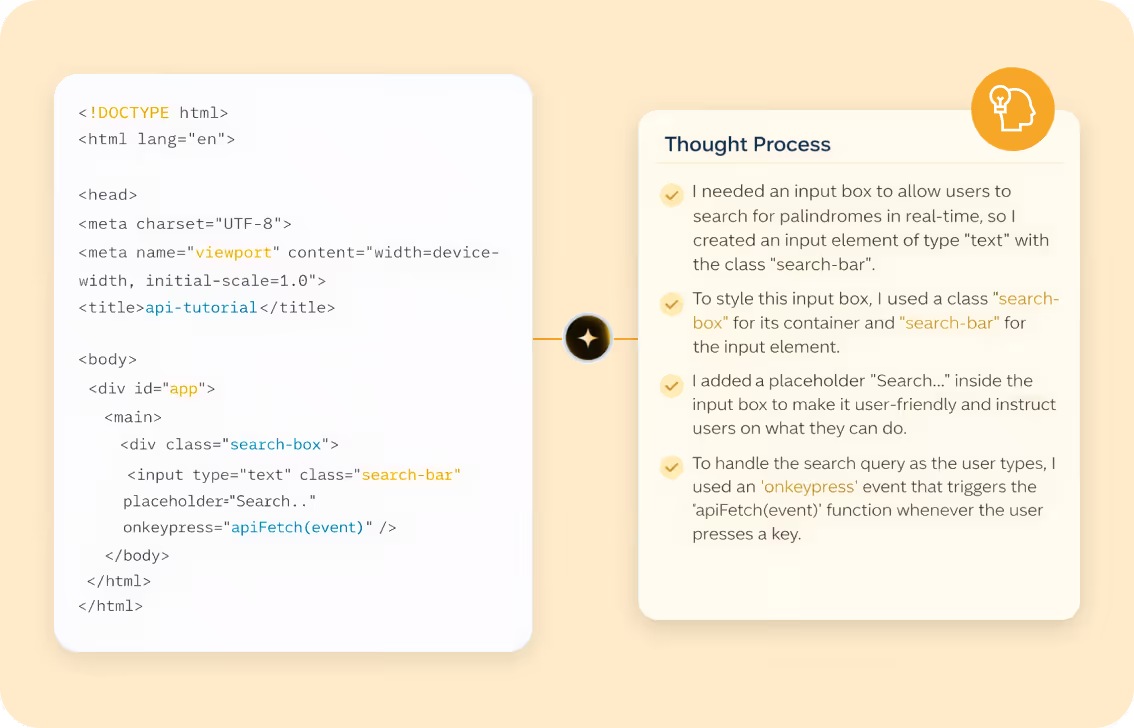

Most coding interviews grade only the final output. Our AI evaluates how candidates think, communicate, and solve problems in a live technical conversation, revealing strengths and gaps that static challenges can’t detect.

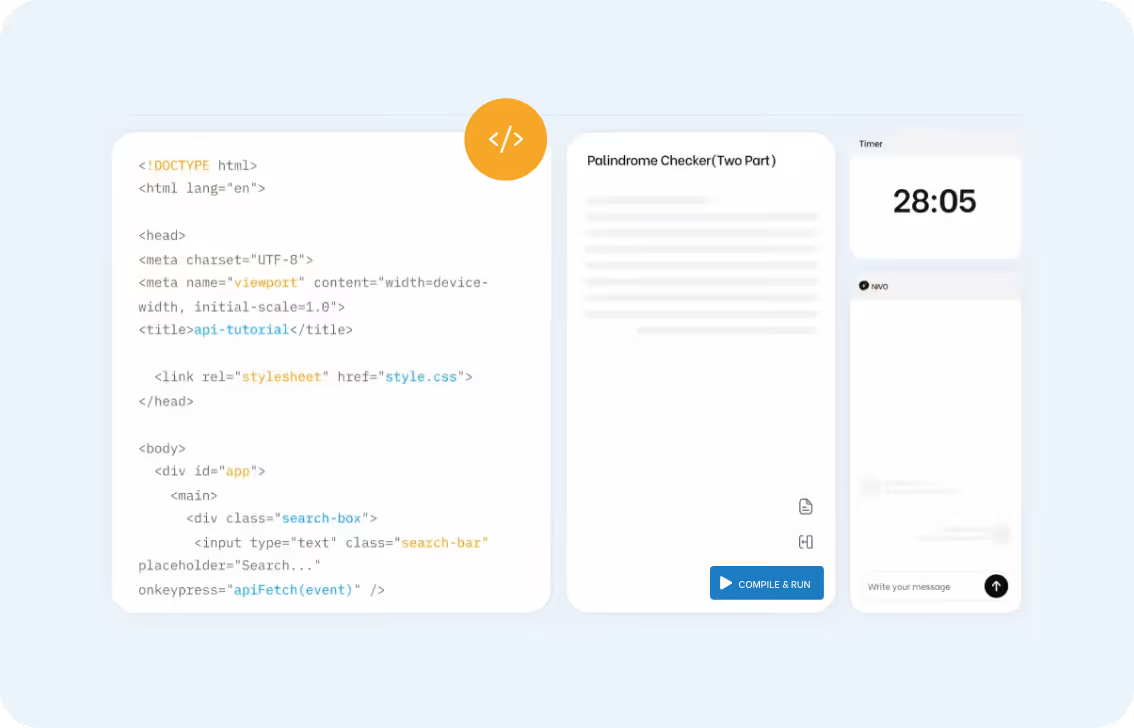

No Downloads Needed: Built-in, Browser-Based Code Editor

Not Just the Final Code

Our AI flags patterns suggesting that the candidate may not be producing the work on their own, such as: